After two years OpenAI finally gets UX right with Canvas

Finally, OpenAI has made a real breakthrough in user experience, after two years of questionable updates.

OpenAI launched two features recently: one undercooked and one that might just be the future of AI. The contrast between Memory and Canvas shows a major issue that many startups face—rushing features out the door—and why it’s worth taking the time to do things right.

Moving too fast with Memory.

A big reason many startups fail is they rush to release features. Speed is a good thing—it lets you test ideas and learn fast—but when speed trumps quality, you end up with features that feel half-baked. OpenAI’s Memory seems to fall into this category. It doesn’t mean the idea is bad; it just needs more work to reach its true potential.

This is important because OpenAI's main revenue comes from monthly subscriptions. You'd expect them to build a solid product around the core to protect it from competitors—like the motto, 'come for the model, stay for the product.' But right now, it feels like they're still relying mostly on the model and their brand.

Even after a few years, the model and the brand remain their primary defenses against competitors. ChatGPT grew faster than any product in history, but as a product, it still lacks depth. The technical foundation is solid (the model is powerful, fast, and relatively cheap), but is that enough? I don’t think so. With switching costs near zero, there needs to be a protective layer that keeps users from canceling their subscription after every competitor release.

Memory is supposed to make the model feel like it knows me, a bit like how TikTok magically seems to understand your taste. The idea is that the longer you use it, the better it tailors its responses to you. But my experience has been different—it’s like a random collection of facts, with no context or true understanding. It remembers that I own Nike running shoes or that I want to learn how to fry beef, but there’s no coherence or depth. You can check what's in your Memory file here.

The real value, the kind that makes you say, “Wow, I never want to switch to another tool,” is missing. The current version of Memory feels like an early draft—lacking that magic touch that turns functionality into something irreplaceable.

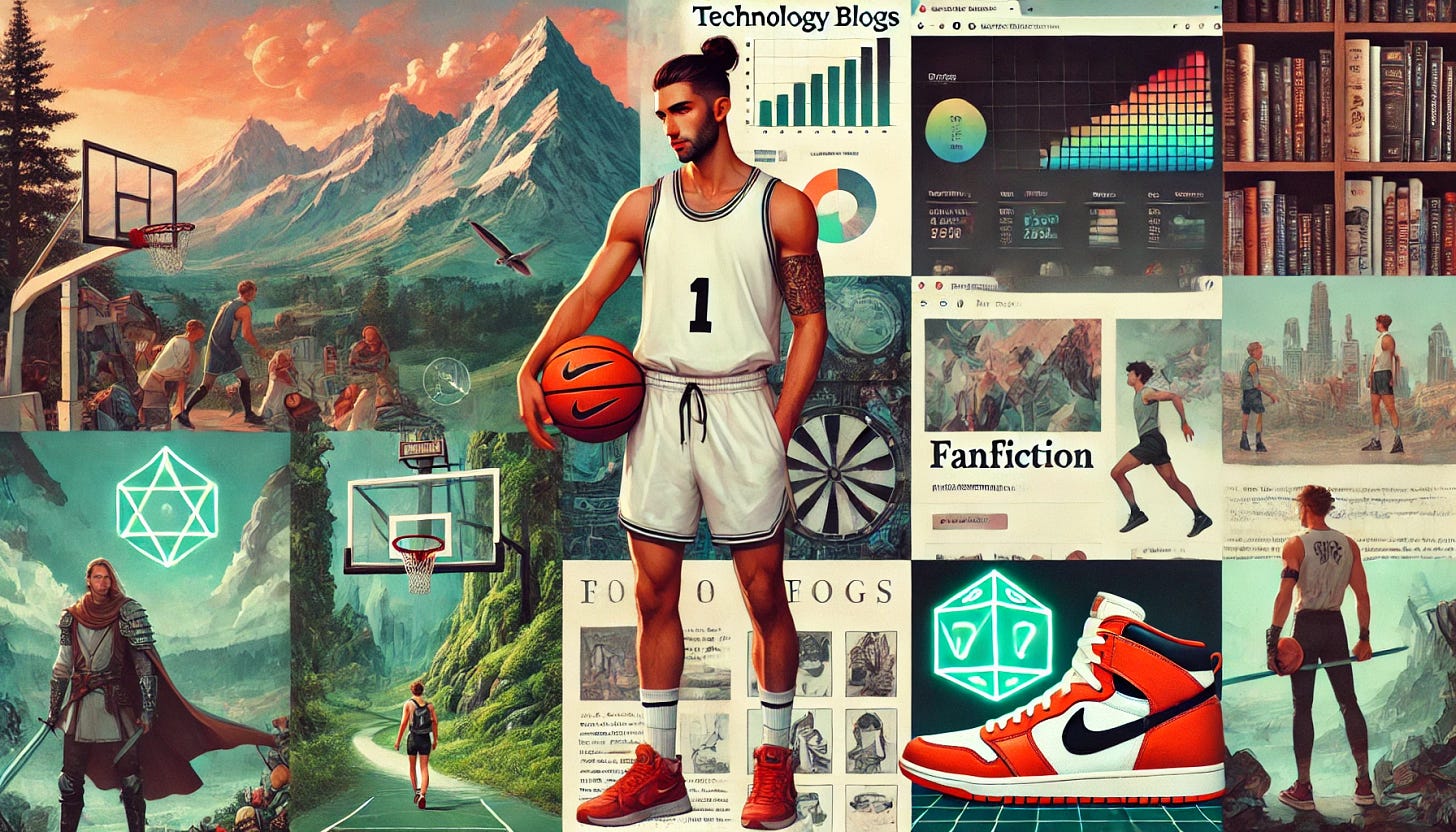

Before we dive into the part where I praise their recent beta feature, let's have a bit of fun. Try asking ChatGPT to draw you based on what's in your Memory—you might be surprised by the results or reminded why Memory needs more work:

Please paint my picture based on our communication. Be creative. Use as many facts as you can from Memory. Make a collage of smaller images for topics that don't fit together well. Try to avoid repeating similar ideas.

And then there’s Canvas.

Just when I was starting to doubt OpenAI’s product strategy, they rolled out Canvas—their take on Anthropic’s Artifacts—and it’s impressive. This isn’t just another chat interface; it’s something that feels truly native to the AI experience, much like Instagram was to mobile when it launched.

Think about when Instagram first came out. The combination of a device you always have with you, a camera, and casual photo filters created a new kind of experience—something that wasn’t possible before.

Historically, the emergence of such apps has reshaped how we solve problems. Think of Booking.com during the internet era or Uber with mobile technology. That’s why I’m interested in how interfaces and devices will evolve around AI. What will the next 'native' experience bring us?

Instagram wasn’t just a camera app; it was built for mobile in a way that made it feel new and different. Canvas has that same vibe. It takes the usual AI chat and turns it into something more: an interactive workspace. You can make edits, add context, undo changes, and ask for help refining a paragraph. It’s not just chat anymore, it’s collaboration. It’s like being a sculptor with a robotic chisel, guiding it to make precise changes as you go.

Okay, that might sound a bit poetic. Here's the specific stuff I liked the most:

You can edit a selected chunk of text directly, no need to redo everything.

You can ask the model to make edits by explaining your thoughts.

There’s an "undo/redo" feature that makes experimenting easier.

You can ask it to find supporting evidence for a fact mentioned in the text.

There’s a button to rewrite the text at a different reading level (like expert or student).

It can fix formatting, do a code review or fix a bug.

These features make Canvas feel like the first true evolution of how we use AI day-to-day. It’s like someone finally understood the frustration points of using a simple chat interface and went, “Okay, let’s fix this.” It’s still early days, but it’s already more useful than the bare-bones Memory feature.

There are many great examples from Karina Nguyen on X.

So...

I get it: OpenAI is growing fast and wants to deliver an MVP of new features quickly. But there’s a fine line between being fast for the sake of speed and building something genuinely valuable. You should be focusing not just on “what else can we launch,” but on “how can we make our features truly irreplaceable?”

Memory felt like a rushed experiment. Canvas, on the other hand, feels like a step in the right direction. In my opinion, after using it, it's pure magic. It feels like someone there really understands me.